Overview

The core of a DevOps pipeline consists of the following: continuous integration/continuous delivery (CI/CD), continuous testing (CT), continuous deployment, continuous monitoring, continuous feedback/evolution, and continuous operations.

This whitepaper provides insight into what these concepts mean and how they serve as building blocks for DevOps.

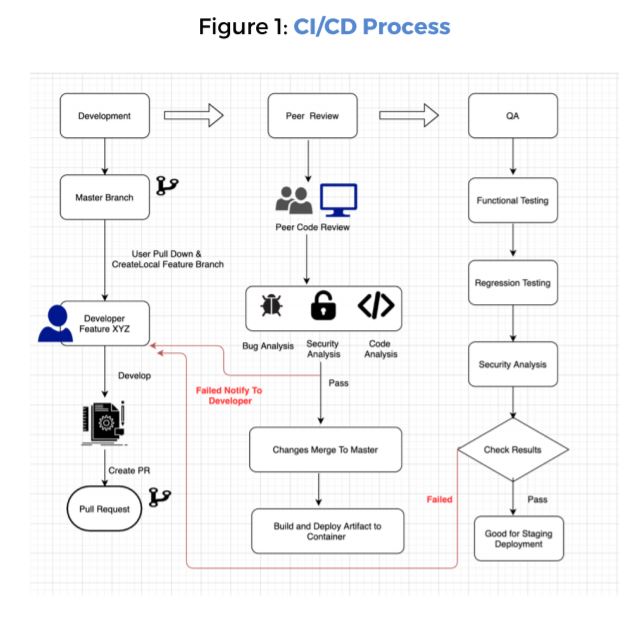

Continuous Integration & Delivery

Before continuous integration (CI) was in place, developers built application features in silos and submitted them separately. CI has completely changed how developers share their code changes with the master branch. With CI, code changes are integrated into a central repository several times a day.

As a result, merging the different code changes becomes easier and less time-consuming. You’ll also encounter integration bugs early, and the sooner you spot them, the easier they are to resolve.

Continuous delivery (CD) is about the incremental delivery of updates/software to production. As an extension of CI, CD enables you to automate your entire software release operation. It allows you to look beyond unit tests and perform other tests, such as integration tests and UI tests.

As a result, developers can validate updates more comprehensively and reduce the risk of deploying bugs. With CD in place, you increase the frequency of releasing new features. Consequently, it enhances the customer feedback loop, thereby creating the opportunity for better customer involvement.

Thus, CI/CD serves as the linchpin of any DevOps pipeline.

Continuous Testing & Deployment

Continuous Testing

- Continuous testing (CT) is another key component of a DevOps pipeline. With continuous testing, you can perform automated tests on the code integrations accumulated during the continuous integration phase.

- Besides ensuring high-quality application development, continuous testing also evaluates the release’s risks before it proceeds to the delivery pipeline. Barring the script development part, continuous testing doesn’t require any other manual intervention.

- Testers write the test scripts before coding begins. As a result, once the code integration happens, the tests begin to run one after the other automatically.

- Automating tests is not always straightforward. It can be a messy business, and it takes time to learn how to do it effectively. Despite the difficulty and investment required in getting up to speed on automated testing, it’s well worth it.

- Teams that have a complete suite of automatically executed tests have advantages over those without one. Such teams know their tests will notify them of problems. They feel comfortable making changes. They proceed with confidence.
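The idea of tests that are written first and then run automatically on every integration can be sketched as follows. This is a minimal illustration using Python's standard `unittest` module; the `apply_discount` feature and the `run_test_gate` helper are hypothetical names chosen for the example, not part of any particular CI product.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical feature code, written after the tests below were agreed on."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    """Test scripts prepared by the testers before coding started."""

    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 150)


def run_test_gate() -> bool:
    """Run the whole suite without manual steps; True means the change may proceed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

A CI job could call `run_test_gate()` after each integration and stop the pipeline when it returns `False`.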

Continuous Deployment

- There’s an element of ambiguity when people talk about continuous delivery and continuous deployment. People often interchange the two terms although there’s a substantial difference between them.

- Continuous deployment follows continuous delivery: updates that successfully pass through the automated testing are released into production automatically. As a result, it enables multiple production deployments in a single day.

- While the goal of continuous delivery is to make your software ready for release at any moment, the actual job of pushing it into production is manual. That’s where continuous deployment comes into the picture.

- And, as mentioned earlier, if the updates can be deployed, they’ll be deployed automatically through continuous deployment.
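The difference between the two terms comes down to who triggers the final push to production. The sketch below models that decision; the `Build` type, the `next_action` function, and the action names are assumptions made for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Build:
    version: str
    tests_passed: bool


def next_action(build: Build, continuous_deployment: bool, approved: bool = False) -> str:
    """Decide what happens to a build that has left the automated test stage."""
    if not build.tests_passed:
        return "reject"  # a failing build never reaches production in either model
    if continuous_deployment:
        return "deploy"  # continuous deployment: released automatically
    # Continuous delivery: the build is release-ready, but a person pushes it.
    return "deploy" if approved else "await-approval"
```

With continuous delivery, a passing build waits in the `await-approval` state until someone approves it; with continuous deployment the same build goes straight to `deploy`.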

Best Practices: Continuous Deployments

Continuous Deployment Strategies

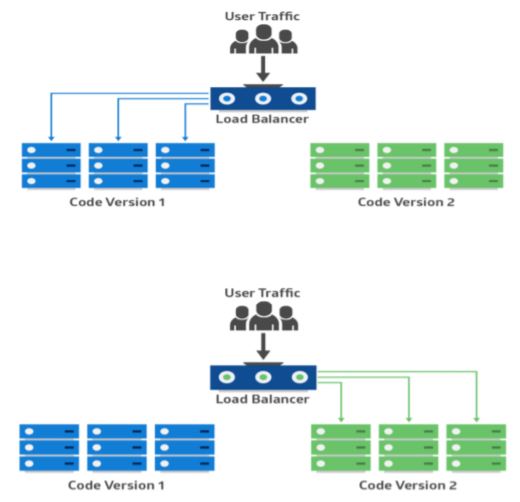

Blue/Green Or Red/Black Deployment:

- This is another fail-safe process. In this method, two identical production environments work in parallel. One is the currently running production environment receiving all user traffic (depicted as Blue). The other is a clone of it, but idle (Green). Both use the same database back end and app configuration.

- The new version of the application is deployed in the green environment and tested for functionality and performance. Once the testing results are successful, application traffic is routed from blue to green. Green then becomes the new production.

- If there is an issue after green becomes live, traffic can be routed back to blue.

- In a blue-green deployment, both systems use the same persistence layer or database back end. It’s essential to keep the application data in sync, and a mirrored database can help achieve that.

- You can use the primary database for write operations by blue and the secondary for read operations by green. During switchover from blue to green, the database is failed over from primary to secondary. If green also needs to write data during testing, the databases can be in bidirectional replication.

- Once green becomes live, you can shut down or recycle the old blue instances. You might deploy a newer version on those instances and make them the new green for the next release.

- Blue-green deployments rely on traffic routing. This can be done by updating DNS CNAME records for hosts. However, long TTL values can delay these changes. Alternatively, you can change the load balancer settings so the changes take effect immediately. Features like connection draining in ELB can be used to serve in-flight connections.
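The switchover and rollback steps described above can be sketched as a small state machine. This is an in-memory illustration only: the `BlueGreenRouter` class and its methods are hypothetical, and a real setup would change DNS records or load balancer settings rather than a Python attribute.

```python
from typing import Callable, Dict, Optional


class BlueGreenRouter:
    """Tracks which of two identical environments receives user traffic."""

    def __init__(self, live_version: str) -> None:
        self.live = "blue"
        self.versions: Dict[str, Optional[str]] = {"blue": live_version, "green": None}

    @property
    def idle(self) -> str:
        return "green" if self.live == "blue" else "blue"

    def deploy_to_idle(self, version: str) -> None:
        """Install the new version on the environment that gets no user traffic."""
        self.versions[self.idle] = version

    def switch(self, is_healthy: Callable[[str], bool]) -> bool:
        """Route traffic to the idle environment only if its version passes testing."""
        candidate = self.idle
        version = self.versions[candidate]
        if version is None or not is_healthy(version):
            return False
        self.live = candidate
        return True

    def rollback(self) -> None:
        """Send traffic back to the previous environment, which is still intact."""
        self.live = self.idle

    def route(self) -> str:
        return f"{self.live}:{self.versions[self.live]}"
```

Because the old environment is left untouched after the switch, `rollback()` is just another routing change, which is what makes the approach fail-safe.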

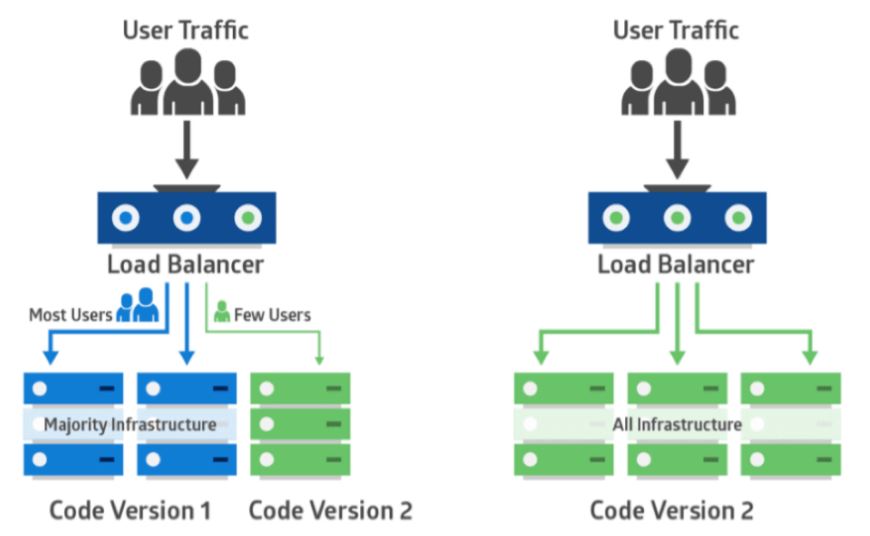

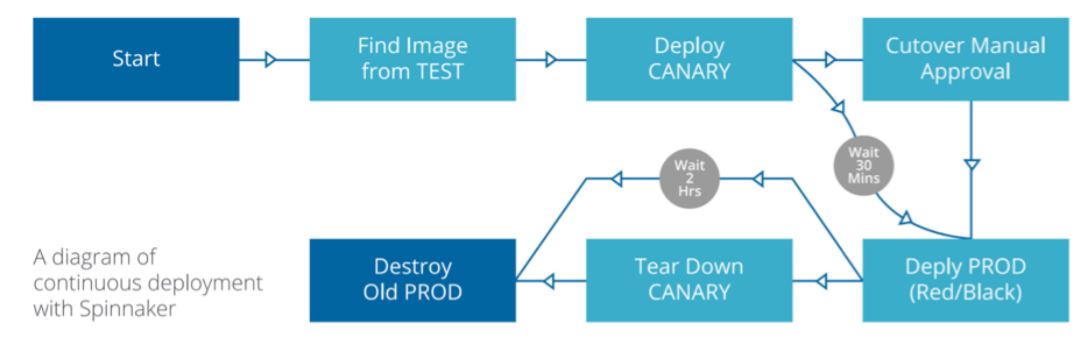

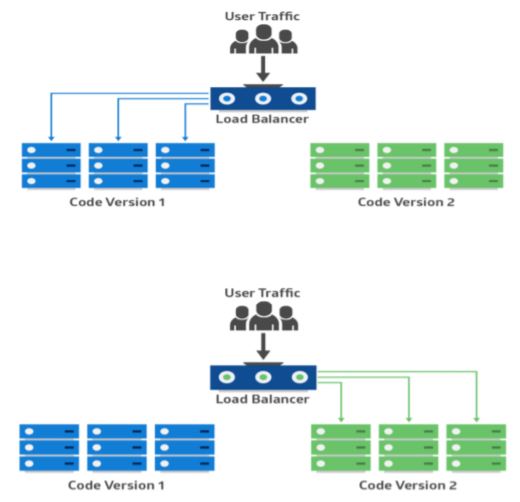

Canary Deployment:

- Canary deployment is like blue-green, except it’s more risk-averse. Instead of switching from blue to green in one step, you use a phased approach.

- With canary deployment, you deploy new application code in a small part of the production infrastructure. Once the application is signed off for release, only a few users are routed to it. This minimizes any impact.

- With no errors reported, the new version can gradually roll out to the rest of the infrastructure. The image below demonstrates canary deployment:

- The main challenge of canary deployment is to devise a way to route some users to the new application. Also, some applications may always need the same group of users for testing, while others may require a different group every time.
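One common way to address the routing challenge is to hash each user ID into a bucket and send the lowest buckets to the new version. The sketch below assumes string user IDs; the `in_canary` function and its `salt` parameter are hypothetical names for this illustration.

```python
import hashlib


def in_canary(user_id: str, percent: int, salt: str = "") -> bool:
    """Return True if this user should be routed to the new version.

    The same user_id and salt always land in the same bucket, so the canary
    group is stable. Changing the salt (for example, per release) selects a
    different group of users.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # bucket in 0..99
    return bucket < percent
```

Raising `percent` in steps gives the gradual rollout: every user who was in the canary group at 5% is still in it at 20%, because their bucket does not change.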

Best Practices: Continuous Deployment Pipeline

Continuous Monitoring

Monitoring your systems and environment is crucial to ensure optimal application performance.

- In the production environment, the operations team leverages continuous monitoring to validate that the environment is stable and that the applications do what they’re supposed to do.

- Rather than monitoring only their systems, DevOps encourages them to monitor applications too. With continuous monitoring in place, you can continuously keep tabs on your application performance.

- The data gathered from monitoring application performance and issues can be used to discover trends and identify areas of improvement.

- Monitoring is crucial to implementing CI/CD successfully. As a starting point, organizations need both control and visibility into their DevOps environment by collecting and instrumenting everything.

- Considering the amounts of data, this can seem like an insurmountable challenge for most organizations. Continuous monitoring of your entire DevOps life cycle helps development and operations teams collaborate to optimize the user experience every step of the way, leaving more time for your next big innovation.

As part of continuous monitoring, we should be doing the following three things:

Logging:

- We use logging to represent state transformations within an application. When things go wrong, we need logs to establish what change in state caused the error.

- But the problem is that obtaining, transferring, storing and parsing logs is expensive. Because of this, it is crucial to log only what is necessary; only logs that can be acted upon should be stored. Log only actionable information.

Tracing:

- Tracing is another major component of monitoring, and it’s becoming even more useful in microservice architectures. A few of the previous resources in the logging section covered tracing and often suggested using correlation IDs for tracing transactions through different parts of your microservices architecture.

- When it comes to front-end tracing, you’ll have to use browser tools. Google has excellent documentation on how to trace performance issues in Chrome using its DevTools suite.
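The logging and tracing advice above (log only actionable events, and tag each entry with a correlation ID) can be combined in a short sketch using Python's standard `logging` and `contextvars` modules. The logger name, the message text, and the `handle_request` and `demo` helpers are assumptions made for illustration.

```python
import contextvars
import io
import logging
import uuid

# Holds the ID of the request currently being processed ("-" when there is none).
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class CorrelationFilter(logging.Filter):
    """Copies the current correlation ID onto every log record."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.correlation_id = correlation_id.get()
        return True


def build_logger(stream) -> logging.Logger:
    logger = logging.getLogger("orders")
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(logging.Formatter("%(levelname)s [%(correlation_id)s] %(message)s"))
    handler.addFilter(CorrelationFilter())
    logger.addHandler(handler)
    logger.setLevel(logging.WARNING)  # keep only entries someone can act on
    logger.propagate = False
    return logger


def handle_request(logger: logging.Logger, incoming_id: str = "") -> str:
    """Reuse the caller's ID if one was passed along, otherwise create one."""
    token = correlation_id.set(incoming_id or uuid.uuid4().hex)
    try:
        logger.debug("entered handler")  # below WARNING, so it is not stored
        logger.warning("payment retry limit reached")
        return correlation_id.get()
    finally:
        correlation_id.reset(token)


def demo() -> str:
    stream = io.StringIO()
    handle_request(build_logger(stream), "req-123")
    return stream.getvalue()
```

If each service forwards the same ID to the services it calls, searching the logs for that ID reconstructs the path of one transaction across the architecture.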

Instrumentation & Monitoring:

- Instrumenting an application and monitoring the results represents the use of a system. It is most often used for diagnostic purposes. For example, we would use monitoring systems to alert developers when the system is not operating “normally”.

- Instrumentation tends to be very cheap to compute. Metrics take nanoseconds to update, and some monitoring systems operate on a “pull” model, which means that the service is not affected by monitoring load.

- Generally the more data you have, the more useful monitoring becomes.

- So typically you would want to instrument all of your services. But make sure you pick a simple, scalable monitoring system.

- Alerting is also an important part of monitoring: the monitoring system should be able to generate proper and meaningful alerts.
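A pull-style setup with a simple alert rule might look like the sketch below. The metric names, the 5% threshold, and the `Metrics` and `error_rate_alerts` helpers are hypothetical; real monitoring systems provide their own client libraries and rule languages.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Metrics:
    """In-process counters. Updating one is a dictionary write, so it is cheap."""

    counters: Dict[str, int] = field(default_factory=dict)

    def inc(self, name: str, amount: int = 1) -> None:
        self.counters[name] = self.counters.get(name, 0) + amount

    def scrape(self) -> Dict[str, int]:
        """Called by the monitoring system when it pulls; returns a snapshot copy."""
        return dict(self.counters)


def error_rate_alerts(snapshot: Dict[str, int], threshold: float = 0.05) -> List[str]:
    """Produce an alert only when the error rate is above the threshold."""
    total = snapshot.get("requests_total", 0)
    errors = snapshot.get("errors_total", 0)
    if total == 0:
        return []
    rate = errors / total
    if rate > threshold:
        return [f"error rate {rate:.1%} exceeds {threshold:.1%}"]
    return []
```

The service only increments counters; the alert rule runs on the monitoring side against the scraped snapshot, so evaluating it adds no load to the service.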

Monitoring Sub-Levels

DevOps monitoring can be done at six different levels.

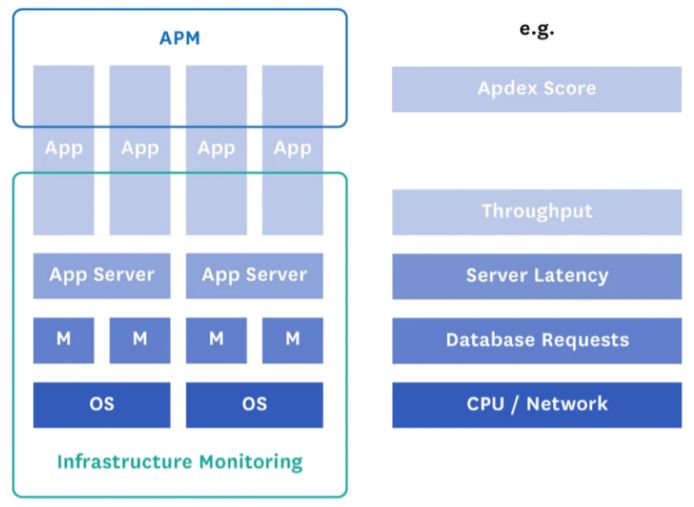

Application Performance Monitoring (APM):

This is the process of monitoring the backend architecture of an application to resolve performance issues and bottlenecks on time.

The APM methodology works in three phases:

- Identifying the Problem: This phase involves proactively monitoring an application for issues before a problem actually occurs. For this, a number of tools are used to discover problems at the infrastructure and application level, including user experience monitoring and synthetic monitoring, wherein user interactions are synthesized to unveil problems.

- Isolating the Problem: Once the problems are identified, they must be isolated from the environment to ensure that they do not impact the entire environment.

- Solving the Problem by Diagnosing the Cause: Once the problem is detected and isolated, it is diagnosed at code level to understand the cause of the problem and fix it.

Network Performance Monitoring:

It’s the practice of consistently checking a network for deficiencies or failures to ensure continued network performance. This may include monitoring network components such as servers, routers, firewalls, etc. If any of these components slows down or fails, network administrators are notified so that a network outage can be avoided.

Infrastructure Monitoring:

Infrastructure monitoring verifies the availability of IT infrastructure components in a data center or on cloud infrastructure (IaaS). This involves monitoring the resources and their availability, and checking for under-utilized and over-utilized resources to optimize the IT infrastructure and the operational cost associated with it.
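Checking for under-utilized and over-utilized resources can be as simple as comparing a usage figure against two thresholds. In the sketch below, the 20% and 80% CPU thresholds, the host names, and the `classify_utilization` function are illustrative assumptions.

```python
from typing import Dict, List


def classify_utilization(
    cpu_percent: Dict[str, float], low: float = 20.0, high: float = 80.0
) -> Dict[str, List[str]]:
    """Group hosts by average CPU usage so capacity can be adjusted."""
    report: Dict[str, List[str]] = {"under_utilized": [], "healthy": [], "over_utilized": []}
    for host in sorted(cpu_percent):
        usage = cpu_percent[host]
        if usage < low:
            report["under_utilized"].append(host)  # candidate for downsizing
        elif usage > high:
            report["over_utilized"].append(host)  # candidate for scaling up or out
        else:
            report["healthy"].append(host)
    return report
```

Hosts in the `under_utilized` group are where operational cost can usually be reduced first.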

Database Performance Monitoring:

- By monitoring the database, it is possible to track the performance, security, backup, and file growth of the DB. The main goal of database monitoring is to examine how a DB server, both hardware and software, is performing.

- This can include taking regular snapshots of performance indicators that help in determining the exact time at which a problem occurred. When DBAs can examine the time when a problem occurred, it’s possible for them to figure out the possible reason for it as well.

API Monitoring

API monitoring is the practice of examining applications’ APIs, usually in a production environment. It gives visibility into the performance, availability, and functional correctness of APIs, which may include factors like the number of hits on an API, where an API is called from, how an API responds to the user request (time spent on executing a transaction), and which APIs are performing poorly.
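The factors listed above (hits, callers, response time, poorly performing APIs) map onto a small data structure. The sketch below is an in-memory illustration; the `ApiMonitor` class, the endpoint paths, and the 500 ms threshold are assumptions, not a reference to any specific monitoring product.

```python
from collections import defaultdict
from statistics import mean
from typing import DefaultDict, Dict, List, Set


class ApiMonitor:
    """Collects per-endpoint hits, callers, and response times."""

    def __init__(self, slow_threshold_ms: float = 500.0) -> None:
        self.slow_threshold_ms = slow_threshold_ms
        self.latencies_ms: DefaultDict[str, List[float]] = defaultdict(list)
        self.callers: DefaultDict[str, Set[str]] = defaultdict(set)

    def record(self, endpoint: str, caller: str, latency_ms: float) -> None:
        self.latencies_ms[endpoint].append(latency_ms)
        self.callers[endpoint].add(caller)

    def hits(self, endpoint: str) -> int:
        return len(self.latencies_ms.get(endpoint, []))

    def average_latency_ms(self) -> Dict[str, float]:
        return {ep: mean(values) for ep, values in self.latencies_ms.items()}

    def poorly_performing(self) -> List[str]:
        """Endpoints whose average response time exceeds the threshold."""
        averages = self.average_latency_ms()
        return sorted(ep for ep, avg in averages.items() if avg > self.slow_threshold_ms)
```

In practice the `record` call would sit in request middleware or an API gateway, so every request is measured without changes to individual handlers.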

Continuous Feedback & Operations

Continuous Feedback/Evolution:

- People often overlook continuous feedback in a DevOps pipeline, and it doesn’t get as much limelight as the other components. However, continuous feedback is equally valuable.

- In fact, the purpose of continuous feedback resonates very well with one of the core DevOps goals: product improvement through customers’/stakeholders’ feedback.

- Merely delivering your applications faster doesn’t equate to successful business outcomes or increased end-user satisfaction. You’ll have to ensure that you and your end users are on the same page with your releases.

- That’s exactly what continuous feedback can help you do, and that’s why it’s an important DevOps component.

Continuous Operations:

- Continuous operations is a relatively newer concept. Gartner defines continuous operations as “those characteristics of a data processing system that reduce or eliminate the need for planned downtime, such as scheduled maintenance. One element of 24-hour-a-day, seven-day-a-week operation.”

- The goal of continuous operations is to effectively manage hardware as well as software changes so that there’s only minimal interruption to the end users. Setting up continuous operations in your DevOps pipeline will cost you a lot. However, considering the massive advantage that it brings to the table, namely minimizing core systems’ unavailability, shelling out a lot of money for it will probably be justified in the long run.

In Conclusion

“Continuous Everything” serves as the backbone for modern-day DevOps.

By applying this methodology to the different phases of development operations within agile, your company can achieve its goals.

Contact Inventive-IT for a complimentary assessment to see how the “Continuous Everything” philosophy can be applied to your business.
